As AI becomes a constant companion in college writing, a new tool called DraftMarks makes the hidden collaboration between humans and machines visible on the page. The open-source system could help students, teachers and readers rethink what authentic writing and learning look like in an AI era.

Generative artificial intelligence has quietly become a co-author in college classrooms. Now, a new tool aims to make that partnership visible.

Researchers from Georgia Tech and Stanford have developed DraftMarks, an open-source system that shows exactly how AI shapes a piece of writing as it evolves. Instead of trying to catch students using AI, the tool is designed to help everyone understand how humans and machines are working together.

The shift comes as AI becomes a routine part of coursework. A recent trend report on AI in education found that 90% of college students use AI in their classes, with nearly half turning to it during the drafting process. With tools like ChatGPT woven into everyday writing, traditional plagiarism detectors and grammar checkers are no longer enough to show what students are actually learning.

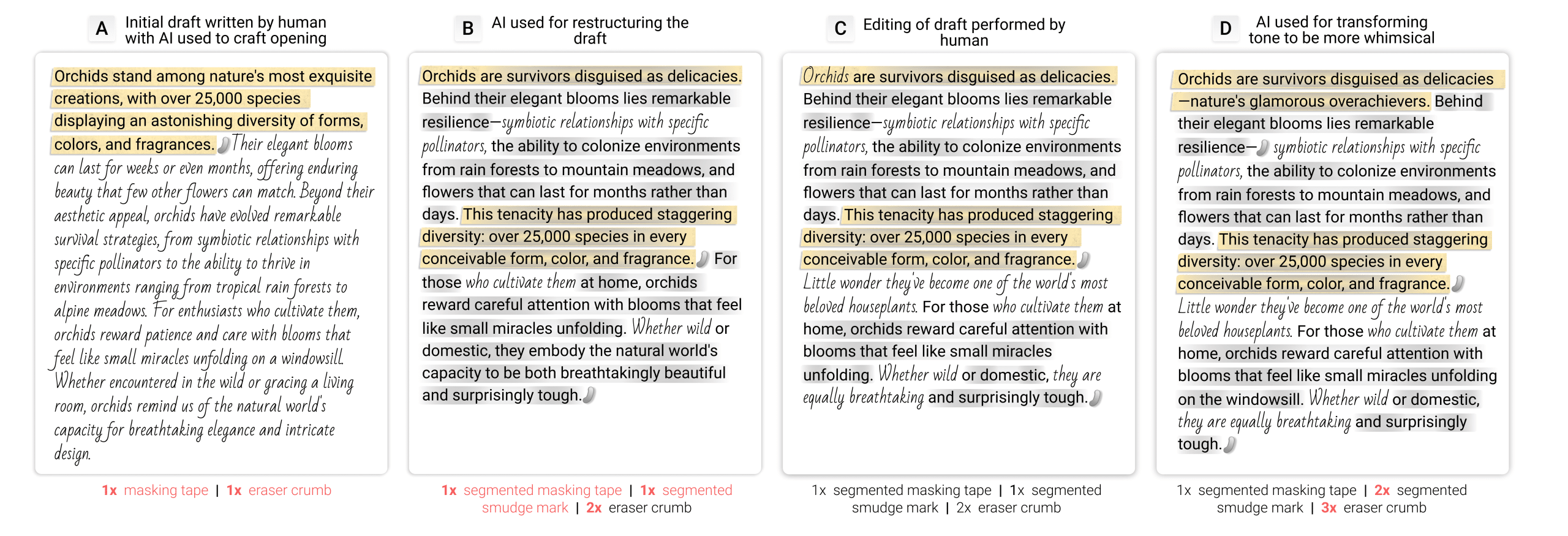

DraftMarks takes a different approach. It does not spit out a percentage of AI-written text. Instead, it layers visual cues directly onto a document to reveal the writing process itself: where a student prompted AI, where they accepted or rejected suggestions, and how the argument and wording changed over time.

The system works like an augmented reading layer. As a writer drafts with AI, DraftMarks tracks the document’s history and classifies different types of edits and interactions. It then overlays familiar-looking marks on the text.

Eraser crumbs mark passages that have been heavily revised. Smudges flag AI-generated changes that alter the strength of an argument rather than just the surface wording. Masking tape highlights sections that were initially generated by AI. Glue residue shows where AI-written text was later removed. Ghost text indicates places where a writer asked an AI system for help but ultimately chose not to use the output. Different fonts distinguish between human-written and AI-generated passages.
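To make the mapping concrete, here is a minimal Python sketch of how a passage's history might translate into the marks described above. It is an illustration only, not DraftMarks' actual code: the `PassageHistory` fields, the `marks_for` function and the revision threshold are all assumptions made for this example, and the font distinction between human and AI text is left out.

```python
from dataclasses import dataclass
from enum import Enum


class Mark(Enum):
    """The five trace-style marks named in the article."""
    ERASER_CRUMBS = "eraser crumbs"
    SMUDGE = "smudge"
    MASKING_TAPE = "masking tape"
    GLUE_RESIDUE = "glue residue"
    GHOST_TEXT = "ghost text"


@dataclass
class PassageHistory:
    """Hypothetical summary of one passage's draft history (not DraftMarks' schema)."""
    revision_count: int = 0             # how many times the passage was edited
    ai_generated_first: bool = False    # passage began as AI output
    ai_text_removed: bool = False       # AI-written text was later deleted here
    ai_help_unused: bool = False        # writer asked AI but did not use the output
    ai_shifted_argument: bool = False   # an AI edit changed the argument's strength


# Assumed cutoff for "heavily revised"; the article gives no number.
HEAVY_REVISION_THRESHOLD = 5


def marks_for(history: PassageHistory) -> set[Mark]:
    """Return the marks to overlay on a passage, one rule per mark."""
    marks: set[Mark] = set()
    if history.revision_count >= HEAVY_REVISION_THRESHOLD:
        marks.add(Mark.ERASER_CRUMBS)
    if history.ai_shifted_argument:
        marks.add(Mark.SMUDGE)
    if history.ai_generated_first:
        marks.add(Mark.MASKING_TAPE)
    if history.ai_text_removed:
        marks.add(Mark.GLUE_RESIDUE)
    if history.ai_help_unused:
        marks.add(Mark.GHOST_TEXT)
    return marks


# Example: a passage that started as AI output and was then revised seven times.
passage = PassageHistory(revision_count=7, ai_generated_first=True)
print(sorted(mark.value for mark in marks_for(passage)))
```

A passage can carry several marks at once, which is why the sketch returns a set rather than a single label. How the real system decides that an edit changed an argument's strength is not described in the article, so the sketch treats that judgment as a precomputed flag.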

Caption: How DraftMarks works

Credit: Georgia Tech

Together, these marks turn an invisible back-and-forth into something readers can see at a glance. They tell a story about how a piece of writing came to be.

For lead author Momin Siddiqui, a master’s student in Georgia Tech’s College of Computing, that visibility is the point.

“By making the invisible parts of the process tangible, it forces writers to confront whether they are truly engaging with AI or just passively accepting it,” Siddiqui said in a news release. “Ultimately, it helps writers make more intentional judgment calls about how they want to collaborate with AI in the future.”

Unlike AI detectors that were built to police cheating, DraftMarks was designed from the ground up with educators in mind. Before building the tool, the research team conducted an initial study with 21 instructors to observe how they review student writing and what they look for when assessing learning, revision and originality.

Those insights shaped DraftMarks’ visual language. The marks deliberately mimic physical traces of analog writing — eraser debris, tape, smudges — so that teachers can interpret them using cues they already understand from years of grading paper drafts.

“These marks are meant to emulate the writing process in ways we’re already familiar with,” said Adam Coscia, a computing doctoral student at Georgia Tech. “They help students and teachers see the effort behind the writing, and whether students actually met the learning objective.”

Behind the scenes, DraftMarks continuously records the draft history and classifies edits and AI interactions as they happen, allowing the visual cues to appear almost in real time. That means an instructor can open a student’s essay and see not only the final product but also how it was constructed.

To test how the tool works outside the lab, the team ran a follow-up study with 70 participants, including students, teachers, journalists and general readers. Each person reviewed a document annotated with DraftMarks and reflected on what they learned from the marks.

Instructors gravitated toward the process view. They used the marks to see how ideas developed, how heavily AI was involved and where students exercised judgment or pushed back against AI suggestions. That kind of insight could help teachers distinguish between a student who relies on AI as a brainstorming partner and one who outsources most of the thinking.

General readers, by contrast, focused on trust. They used the marks to gauge authorial intent and authenticity — how much of the piece reflected a human voice and how much was shaped by AI. For them, DraftMarks became a tool for deciding how much confidence to place in what they were reading.

Coscia noted that using DraftMarks on his own writing changed his perspective.

“DraftMarks completely changed how I think about my own writing,” Coscia said. “I was surprised by how much I cared about authorial intent once I could actually see how AI affected my tone. It made me realize small AI choices can subtly reshape what I’m trying to say.”

That kind of reflection is exactly what the researchers hope to encourage. Rather than treating AI as something to be hidden or detected, DraftMarks invites students to think critically about when AI helps them learn and when it might be doing too much of the work.

The tool also offers a possible path forward for educators who feel caught between banning AI and ignoring it. With a clearer window into the writing process, instructors could design assignments that explicitly incorporate AI, then use DraftMarks to see how students are engaging with those tools and whether they are still practicing key skills.

More broadly, the project reflects a growing push in education and journalism to move from detection to transparency. As AI continues to reshape how writing happens — from first-year composition classes to newsrooms and corporate communications — tools like DraftMarks could help keep human judgment at the center.

For now, the system is a research prototype, but its creators envision it as part of a new generation of open-source tools that make AI’s role in writing visible rather than mysterious. In an era when almost any sentence might have been touched by an algorithm, they argue, understanding how that collaboration works may matter as much as the words on the page.

Source: Georgia Institute of Technology