Dartmouth researchers have created a way to turn short multiple-choice quizzes into detailed maps of what students really know. The AI-powered framework could help teachers and future tutoring systems personalize learning at scale.

A new tool from Dartmouth College promises to turn the humble quiz into something far more powerful: a detailed map of what a student truly understands and where they are getting lost.

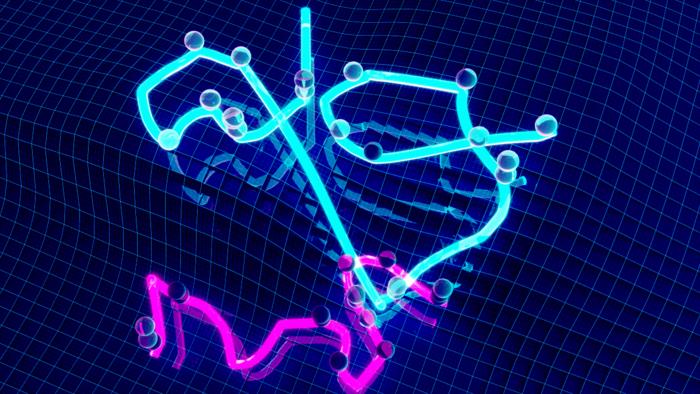

Instead of treating a quiz score as a simple percentage, the Dartmouth framework uses artificial intelligence to build a kind of knowledge landscape for each learner. Peaks on the map represent concepts a student has mastered; valleys mark areas where they are struggling. The work, published in the journal Nature Communications, could help teachers tailor instruction and fuel a new generation of personalized AI tutors.

Caption: A 3D map from a new study reporting a framework for capturing students’ conceptual knowledge.

Credit: Courtesy of Jeremy Manning and Paxton Fitzpatrick

The work starts from a basic problem in education: traditional tests are blunt instruments.

“When a student scores 50% on a quiz, that number conveys little about what they actually understand,” senior author Jeremy Manning, an associate professor of psychological and brain sciences at Dartmouth, said in a news release. “They may have understood half of the material perfectly, or understood all of it only partially, or anywhere in-between.”

The new framework aims to reveal those hidden differences by modeling how ideas connect to one another.

To do that, the researchers borrowed a tool from modern AI known as text embedding. Embedding models, which also underpin many large language models, represent words or concepts as coordinates in a high-dimensional space. In that space, related ideas sit close together, while unrelated ones are far apart.

For example, gravity and magnetism would appear near each other, while genetics and art history would be distant. By assigning each quiz question a location in this conceptual space, the Dartmouth team could infer how a student’s knowledge in one area might extend to nearby topics.
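To make the idea of "closeness" concrete, here is a minimal sketch in Python. The three-dimensional vectors are invented for illustration and do not come from any real embedding model or from the study; real embeddings typically have hundreds of dimensions. Cosine similarity is one common way to compare embeddings, and it is used here as an assumption rather than as the measure the Dartmouth team necessarily chose.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy "embeddings", hand-written for this example only.
toy_embeddings = {
    "gravity": [0.9, 0.1, 0.0],
    "magnetism": [0.8, 0.2, 0.1],
    "genetics": [0.1, 0.9, 0.1],
    "art history": [0.0, 0.1, 0.9],
}

physics_pair = cosine_similarity(
    toy_embeddings["gravity"], toy_embeddings["magnetism"]
)
distant_pair = cosine_similarity(
    toy_embeddings["genetics"], toy_embeddings["art history"]
)

print(f"gravity vs. magnetism:    {physics_pair:.2f}")
print(f"genetics vs. art history: {distant_pair:.2f}")
```

With these made-up numbers, the two physics concepts score much closer to 1 than the unrelated pair does, which is the pattern the article describes.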

Lead author Paxton Fitzpatrick, a doctoral candidate in Manning’s group, explains that this is similar to what good teachers already do when they review an exam with a student.

“When a student seeks help after struggling on an exam, providing them with individualized feedback or guidance requires examining their performance on different questions to better understand what concepts they have and haven’t mastered,” Fitzpatrick said in the news release. “While that’s traditionally the role of a teacher or tutor, the growth of online and remote learning means that sort of personalized instruction isn’t always available to every student.”

The Dartmouth framework tries to automate that kind of expert judgment. It assumes that knowledge is not random but tends to vary smoothly across related ideas.

“Our approach leverages the intuition that people’s knowledge tends to vary gradually across related ideas—that knowing a lot about one concept suggests you’re more likely, though not guaranteed, to also know something about related concepts,” Manning added.

Using this assumption, the system can take a relatively small number of quiz responses and estimate a student’s understanding across a much wider range of concepts. In other words, it can fill in parts of the map that were never directly tested.
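One simple way to picture this "filling in" step is a distance-weighted average: answers to nearby questions count heavily, and answers to distant questions count very little. The sketch below assumes a Gaussian weighting and two-dimensional coordinates purely for illustration. The function name `estimate_knowledge`, the `length_scale` parameter and the sample data are all hypothetical, and the published framework may use a different estimator.

```python
import math


def estimate_knowledge(target, answered, length_scale=0.5):
    """Estimate knowledge at `target` from quiz answers at nearby points.

    `answered` is a list of (coordinates, correct) pairs, where `correct`
    is 1 for a right answer and 0 for a wrong one. Each answer is weighted
    by a Gaussian of its distance from `target` (an illustrative
    assumption, not necessarily the study's method).
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for coords, correct in answered:
        dist_sq = sum((t - c) ** 2 for t, c in zip(target, coords))
        weight = math.exp(-dist_sq / (2 * length_scale ** 2))
        weighted_sum += weight * correct
        weight_total += weight
    if weight_total == 0.0:
        return None  # no answered question is close enough to say anything
    return weighted_sum / weight_total


# Hypothetical quiz results: two correct answers in one region of the
# concept space, two incorrect answers in another.
answered = [
    ((0.0, 0.0), 1),
    ((0.2, 0.1), 1),
    ((1.0, 1.0), 0),
    ((0.9, 1.1), 0),
]

near_mastered = estimate_knowledge((0.1, 0.0), answered)
near_struggling = estimate_knowledge((1.0, 0.9), answered)

print(f"untested concept near correct answers: {near_mastered:.2f}")
print(f"untested concept near wrong answers:   {near_struggling:.2f}")
```

Neither target point was quizzed directly, yet the estimate is high near the correctly answered questions and low near the missed ones. A smaller `length_scale` would make the estimate depend more strictly on the closest questions.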

The researchers tested their method with 50 Dartmouth undergraduates who watched online physics lectures from Khan Academy, a nonprofit educational platform. Students took short multiple-choice quizzes before and after the lectures. From those answers, the team built individual knowledge maps and then used the maps to predict which questions each student would answer correctly.

According to the study, the maps not only tracked how students’ understanding changed after the lectures but also reliably forecast their performance on specific quiz items. That suggests the underlying structure captured by the text embedding models is closely aligned with how students actually organize concepts in their minds.

Manning emphasized that the project was driven by a deeper scientific question about how knowledge is structured.

“Our goal wasn’t just to build better quizzes or grading methods,” he said. “We wanted to test a theoretical idea, that knowledge is structured in the particular way implied by text embedding models, and that this structure shapes what people are likely to know and how they acquire new knowledge.”

Co-author Andrew Heusser, who worked on the project as a postdoctoral researcher in Manning’s lab, sees the tool as a mathematical version of what expert instructors already do intuitively. When a student struggles with a new idea, a skilled teacher will often reframe it using examples or concepts the student already understands. The Dartmouth framework formalizes that process by explicitly mapping how concepts relate to one another and to a student’s current understanding.

In practice, such maps could give instructors a fast, visual way to see where a class is thriving and where it needs more support. Instead of just knowing that many students missed question 7, a teacher could see that the underlying issue is, for example, a cluster of related ideas in mechanics or energy.

The team also sees potential beyond the classroom. Detailed knowledge maps could help power AI tutoring systems that adapt in real time, choosing explanations, examples or practice problems that connect new material to what a student already knows. That kind of personalization is difficult to provide at scale with human instructors alone.

At the same time, the researchers stress that AI should be viewed as a supplement, not a substitute, for human teachers. They argue that in small classes or one-on-one settings, human educators can still understand and motivate students in ways that machines cannot. The promise of tools like this one, they suggest, is to extend some of the benefits of personalized teaching to many more learners.

To let the public experiment with the idea, the team has released an online demo of their framework. Users can answer questions to generate an interactive map of their own knowledge, see predicted strengths and weaknesses, and explore recommended learning materials to fill gaps or extend their understanding.

As education continues to move online and AI systems become more common in learning environments, tools that can see beyond raw scores to the structure of a student’s knowledge may become increasingly important. The Dartmouth work points toward a future where even a short quiz can open a detailed window into how each student thinks and how best to help them grow.

Source: Dartmouth College