A team of researchers from Stanford University and the University of California, San Diego has developed a new kind of camera designed with robotics in mind. The camera addresses a key imaging challenge that robotics engineers face today: digital cameras, even top-of-the-line models, are not well suited to capturing the wide field of view that a robot, such as a self-driving car, needs to move through its surroundings.

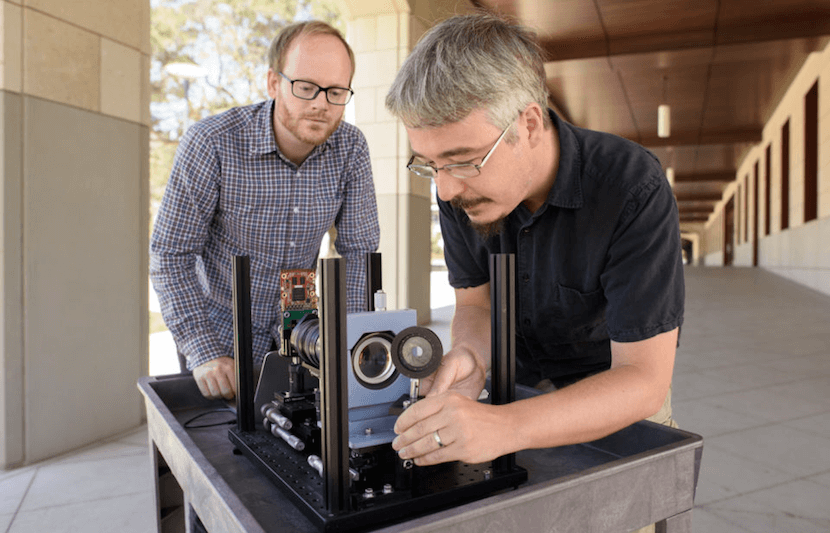

The University Network (TUN) spoke with Donald Dansereau, a postdoctoral fellow at Stanford and first author on this project, to get his take on the new proof-of-concept camera.

When describing the camera, Dansereau drew parallels to the eyes of living organisms.

“Like the human eye, the camera captures a curved image to offer a wide field of view: its 140-degree view is roughly in line with human vision,” he said. “For depth perception, it’s more like an insect’s compound eye, using many smaller views of the world to make sense of depth, shape, and higher-order effects like reflections and transparency. This is done with an array of hundreds of thousands of tiny lenslets, packed together in honeycomb fashion, which turns the camera into a light field camera.”

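A light field camera, also referred to as a “4D” camera, records not only how much light reaches each point on the sensor but also the direction from which that light arrives: two spatial dimensions plus two angular dimensions, hence “4D.” Because this directional information is preserved, the focus of an image can be adjusted after it has been taken.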
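One common textbook way to refocus a light field after capture is “shift-and-add”: each viewpoint’s image is shifted in proportion to its offset from the central view, and the shifted images are averaged, so objects at the chosen depth line up and appear sharp while everything else blurs. The following Python sketch illustrates that general idea on a tiny synthetic light field. The `lf[u][v][y][x]` layout, the `refocus` and `point_light_field` names, and the integer-shift, edge-clamping simplification are all assumptions made for illustration; this is not the Stanford/UCSD team’s actual processing pipeline.

```python
# Shift-and-add refocusing sketch (illustrative assumption, not the
# researchers' method). Light field layout: lf[u][v][y][x], where (u, v)
# index the viewpoint (angular dimensions) and (y, x) index pixels
# (spatial dimensions).

def refocus(lf, slope):
    """Refocus by shifting each view and averaging.

    Convention (assumed): a scene point with disparity d appears in view
    (u, v) at (y0 + d*(u - cu), x0 + d*(v - cv)), where (cu, cv) is the
    central view. Choosing slope = d brings that point into focus.
    Shifts are rounded to whole pixels and edges are clamped for brevity.
    """
    n_u, n_v = len(lf), len(lf[0])
    height, width = len(lf[0][0]), len(lf[0][0][0])
    cu, cv = (n_u - 1) / 2, (n_v - 1) / 2
    out = [[0.0] * width for _ in range(height)]
    for u in range(n_u):
        for v in range(n_v):
            dy = round(slope * (u - cu))
            dx = round(slope * (v - cv))
            view = lf[u][v]
            for y in range(height):
                sy = min(max(y + dy, 0), height - 1)  # clamp row index
                for x in range(width):
                    sx = min(max(x + dx, 0), width - 1)  # clamp column index
                    out[y][x] += view[sy][sx]
    n_views = n_u * n_v
    return [[p / n_views for p in row] for row in out]


def point_light_field(disparity, n=3, size=5):
    """Hypothetical test scene: one bright point with the given disparity,
    seen from an n x n grid of viewpoints on size x size images."""
    c, mid = (n - 1) // 2, size // 2
    lf = []
    for u in range(n):
        row = []
        for v in range(n):
            img = [[0.0] * size for _ in range(size)]
            img[mid + disparity * (u - c)][mid + disparity * (v - c)] = 1.0
            row.append(img)
        lf.append(row)
    return lf


if __name__ == "__main__":
    lf = point_light_field(disparity=1)
    sharp = refocus(lf, slope=1)    # focal plane matches the point
    blurred = refocus(lf, slope=0)  # focal plane elsewhere
    print(sharp[2][2])                     # 1.0: all nine views line up
    print(max(max(r) for r in blurred))    # about 0.111: spread over nine pixels
```

Refocusing at the point’s own disparity stacks all nine views onto a single pixel, while refocusing at a different depth spreads the same light over several pixels. That spreading is the out-of-focus blur a user sees when choosing a different focal plane after the fact.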

“Having a wide field of view simplifies a lot of jobs in robotics, and light field capture simplifies tasks where 3D scene motion would normally complicate perception,” Dansereau explained to TUN. “Taken together, this camera is a great fit for jobs requiring close-up interaction with complex scenes, on a tight time and power budget. Drones landing, delivery robots, self-driving cars watching out for pedestrians, cyclists and other nearby cars, these kinds of autonomy are much easier with a wide-FOV light field camera.”

Dansereau expects cameras like this one to shape the future of robotics. “In 10-15 years, it seems possible that more cameras will be manufactured for robots than for humans, and it makes a lot of sense to ask ‘what’s the best camera for a given robot?’” he told TUN. “I believe light field cameras and other computational imaging technologies will allow us to tailor cameras to specific robotics applications, allowing greater levels of autonomy and reliability even in challenging conditions.”

The uses of this technology, however, extend beyond robotics. Dansereau and his research team see strong potential for 4D cameras in augmented and virtual reality. “This camera’s wide field of view and rich light field information simplify tracking of camera motion and segmentation and tracking of hands, people, and other moving objects in the environment,” Dansereau told TUN. “In augmented reality, these are crucial capabilities to enable the next generation of real-time interaction that includes integration of content with the user’s environment. In virtual reality, light field capture enables the user to focus at different depths in the scene. It also photo-realistically captures higher-order optical phenomena like specularities and transparency. This kind of native wide-FOV light field capture makes a lot of sense for cinematic VR content creation.”

Next, the team plans to build a compact prototype, which they hope will be small and light enough to test on a robot. After that, they plan to develop a wearable version of the camera.

The full paper is available here.

The research team also includes Gordon Wetzstein, assistant professor of electrical engineering at Stanford; Joseph Ford, professor of computer/electrical engineering at the University of California, San Diego; and Glenn Schuster, graduate student researcher at the University of California, San Diego.