In a groundbreaking new project, MIT researchers have developed a computerized system that uses artificial intelligence (AI) to see people through walls.

“RF-Pose,” as they have dubbed the technology, functions as real-life X-ray vision.

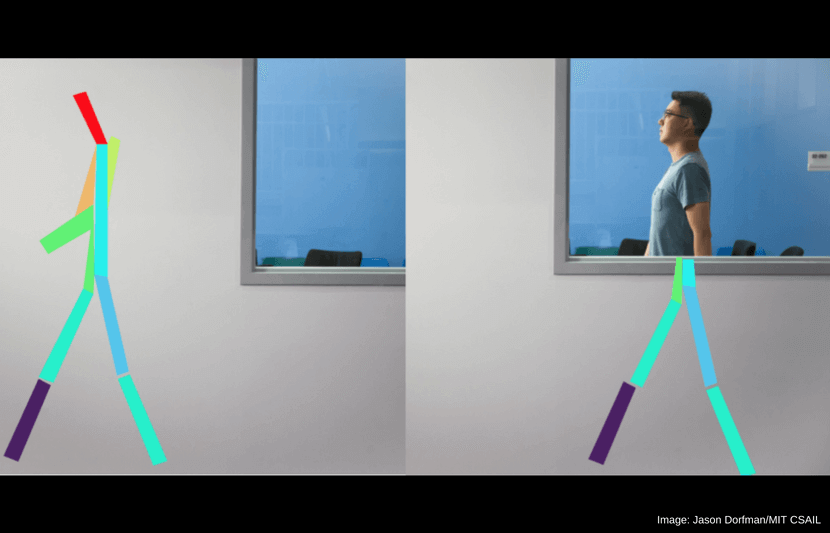

The technology uses a neural network to analyze radio signals that bounce off people’s bodies. This allows the system to detect people’s postures and movement in real time, even from behind walls or in the dark. RF-Pose then creates a two-dimensional stick figure that moves as the person does.

So, what inspired the team to develop the technology?

“Estimating the human pose is an important task in computer vision with applications in surveillance, activity recognition, gaming, etc.,” said Dina Katabi, the Andrew & Erna Viterbi Professor of Electrical Engineering and Computer Science at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), who led the research.

The research was presented on June 21 at the Conference on Computer Vision and Pattern Recognition (CVPR) in Salt Lake City, Utah.

How does the technology work?

AI learns by example. That is, when a neural network is shown a large data set of items, it learns to identify patterns in that data.

For RF-Pose, the researchers had to teach AI to associate particular radio signals with specific human actions.

To do so, they collected thousands of images of people doing activities like walking, talking, standing, sitting, opening doors, waiting for elevators, and more.

They used these images to create stick figures, posing just as the people in the images did.

They paired these stick figure poses with corresponding radio signals and showed them to the AI.

“This combination of examples enabled the system to learn the association between the radio signal and the stick figures of the people in the scene,” Katabi said.

“Post-training, RF-Pose was able to estimate a person’s posture and movements without cameras, using only the wireless reflections that bounce off people’s bodies.”
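The training recipe described above, in which poses derived from images serve as labels for the matching radio signals, can be illustrated with a toy sketch. This is not the researchers' model: the two-number "radio feature," the single keypoint, the linear predictor, and every function name here are assumptions made purely for illustration, and the data is synthetic.

```python
import random

def make_paired_examples(n, seed=0):
    """Synthetic stand-in for synchronized camera and radio recordings.

    Each example pairs a hypothetical 2-number radio feature with the 2-D
    keypoint (x, y) that an image-based pose estimator would have produced
    for the same moment in time.
    """
    rng = random.Random(seed)
    examples = []
    for _ in range(n):
        radio = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        # Assumed hidden relationship between the radio feature and the pose.
        keypoint = [2.0 * radio[0] - 1.0 * radio[1],
                    0.5 * radio[0] + 1.5 * radio[1]]
        examples.append((radio, keypoint))
    return examples

def predict(weights, radio):
    """Predict a keypoint from a radio feature with a 2x2 linear model."""
    return [sum(w * r for w, r in zip(row, radio)) for row in weights]

def train(examples, epochs=50, lr=0.1):
    """Fit the model by stochastic gradient descent on squared error."""
    weights = [[0.0, 0.0], [0.0, 0.0]]
    for _ in range(epochs):
        for radio, target in examples:
            pred = predict(weights, radio)
            for i in range(2):
                err = pred[i] - target[i]
                for j in range(2):
                    weights[i][j] -= lr * err * radio[j]
    return weights

def mean_error(weights, examples):
    """Average absolute keypoint error over a set of examples."""
    total = 0.0
    for radio, target in examples:
        pred = predict(weights, radio)
        total += sum(abs(p - t) for p, t in zip(pred, target))
    return total / len(examples)

train_set = make_paired_examples(200, seed=0)
test_set = make_paired_examples(50, seed=1)

untrained = [[0.0, 0.0], [0.0, 0.0]]
error_before = mean_error(untrained, test_set)

weights = train(train_set)
error_after = mean_error(weights, test_set)  # radio only, no images needed
```

The point of the sketch is the division of labor: images are needed only to produce the training labels. Once trained, the model predicts poses from the radio input alone, which is why a system built this way could keep working where a camera cannot see.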

RF-Pose was never explicitly trained to identify people’s actions through walls. But because it is trained to identify people’s movement from radio signals, which pass through walls, it can still detect movement when a barrier is in the way.

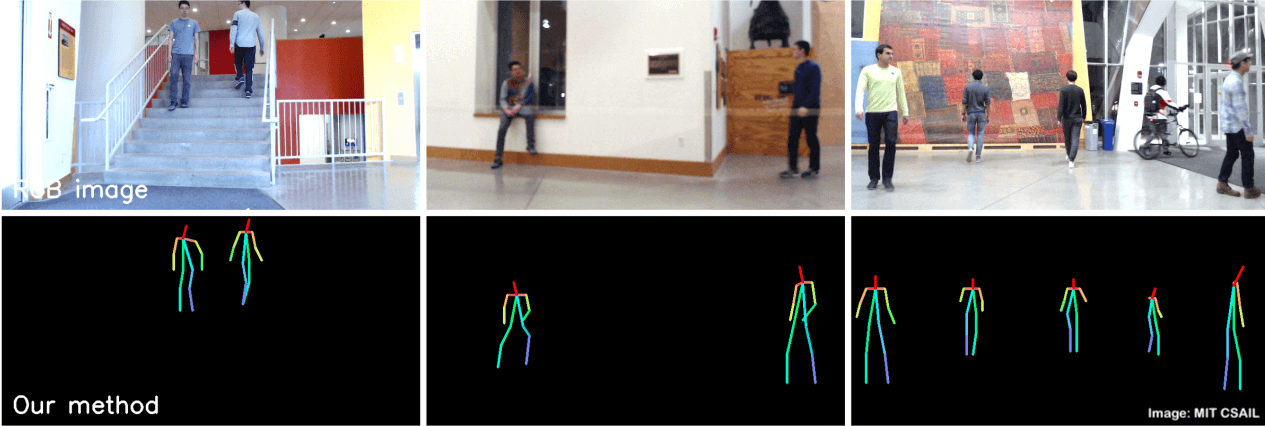

In a demonstration video, RF-Pose is shown monitoring the movement of people through walls. It is capable of tracking the movements of multiple people at one time.

The researchers also found that the technology can use radio signals to identify specific individuals. In experiments, it was able to identify one person out of a lineup of 100 with 83 percent accuracy.

To mitigate privacy and consent concerns, the technology can be built with a “consent mechanism” that would require the system’s user to perform a specific series of physical cues before RF-Pose begins monitoring.

Real-world applications

The technology has a wide range of potential applications, but the researchers particularly highlighted possible medical uses.

They believe that it could be used to monitor a range of diseases, including Parkinson’s, multiple sclerosis (MS), and muscular dystrophy, by enabling doctors to observe disease progression.

It could also be used to assist elderly people by monitoring their actions and watching for falls or injuries.

“We’ve seen that monitoring patients’ walking speed and ability to do basic activities on their own gives health care providers a window into their lives that they didn’t have before,” Katabi said in a statement.

“A key advantage of our approach is that patients do not have to wear sensors or remember to charge their devices.”

The team is already working with doctors to see how the system can be applied.

In addition, the team believes the technology could be used to create video games in which players move between different rooms. It could even be used in policing or in search-and-rescue missions to help locate survivors.

Moving forward, the researchers will continue to modify the technology to make it better equipped for real-world applications.

“For this paper, the model only outputs a 2-D skeleton, but the team is also working to create 3-D representations that would be able to reflect even smaller micromovements,” said Katabi.

“For example, it might be able to see if an older person’s hands are shaking regularly enough that they may want to get a check-up.”