A new radar “tag” called EyeDAR could give self-driving cars an extra set of eyes by turning streetlights and stop signs into smart, talking sensors. Rice University engineers say the low-power devices may help autonomous vehicles see around corners, through bad weather and beyond their own blind spots.

Self-driving cars may soon get help from the road itself.

Engineers at Rice University, led by postdoctoral researcher Kun Woo Cho, have developed a low-power radar sensor called EyeDAR that can be mounted on streetlights, traffic signals and other roadside fixtures to give autonomous vehicles a clearer, wider view of their surroundings.

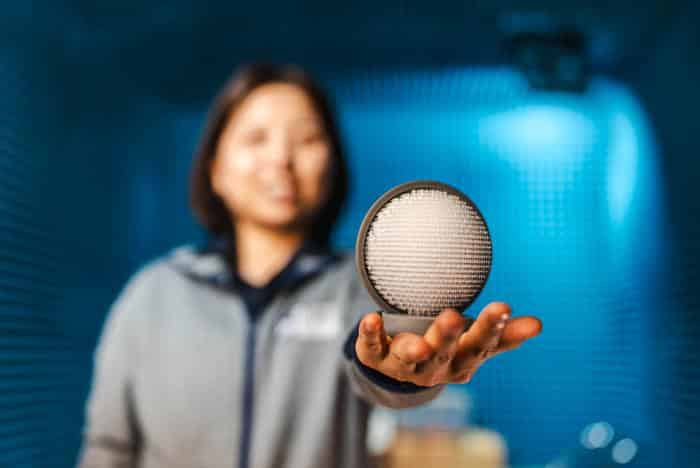

Roughly the size of an orange, EyeDAR is designed to work with the radar systems already built into many autonomous and advanced driver-assistance vehicles. By sitting above the street and off to the side, the device can catch radar reflections that a car’s own sensors miss, then send that information back to the vehicle in real time.

The goal is to reduce dangerous blind spots — like a pedestrian stepping out from behind a truck, a cyclist approaching at an odd angle or a car inching forward from a cross street — especially in busy urban areas and in bad weather.

Camera- and laser-based systems, however, can struggle when conditions are less than ideal.

“Current automotive sensor systems like cameras and lidar struggle with poor visibility such as you would encounter due to rain or fog or in low-lighting conditions,” Cho, who works in the lab of Ashutosh Sabharwal, Rice’s Ernest Dell Butcher Professor of Engineering and professor of electrical and computer engineering, said in a news release. “Radar, on the other hand, operates reliably in all weather and lighting conditions and can even see through obstacles.”

Caption: Kun Woo Cho holding an EyeDAR sensor prototype in the anechoic chamber where it is being tested.

Credit: Photo by Jared Jones/Rice University

Cho presented the work at HotMobile, the International Workshop on Mobile Computing Systems and Applications, held in Atlanta in late February.

Radar works by sending out radio waves and listening for the echoes that bounce back from objects. But only a small fraction of the signal returns to the source. Most of it scatters in other directions, carrying useful information that a single radar unit on a car will never see.

EyeDAR is designed to sit where those “lost” signals go.

Mounted on existing infrastructure such as traffic lights, stop signs or streetlights, the sensor captures stray radar reflections and figures out where they came from. It then relays that directional information back to the vehicle that sent the original radar pulse.

“It is like adding another set of eyes for automotive radar systems,” Cho added.

To do this without bulky hardware or heavy computing, the Rice team turned to biology for inspiration. EyeDAR’s design echoes the human eye, with two main parts working together.

On the front is a 3D-printed Luneburg lens made from resin. Like the eye's lens, it focuses incoming signals from any direction onto a specific point on the opposite side. Behind it sits a ring of antennas that acts like a retina, detecting where the focused signal lands and thus determining its direction.
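For readers who want the geometry spelled out, the lens-and-retina idea can be sketched in a few lines of Python. The sketch assumes an ideal, two-dimensional Luneburg lens, whose textbook refractive-index profile is n(r) = sqrt(2 - (r/R)^2), and a hypothetical ring of equally spaced antennas. The function names and the simple "brightest antenna wins" rule are illustrative assumptions, not Rice's actual design or signal processing.

```python
import math

def luneburg_index(r: float, radius: float) -> float:
    """Refractive index of an ideal Luneburg lens at distance r from its center."""
    if not 0.0 <= r <= radius:
        raise ValueError("r must lie inside the lens")
    return math.sqrt(2.0 - (r / radius) ** 2)

def direction_from_ring(powers: list[float]) -> float:
    """Estimate the arrival azimuth (degrees) from power on a ring of antennas.

    Assumption: antenna k sits at azimuth 360*k/N on the lens's back surface.
    An ideal Luneburg lens focuses a wave arriving from azimuth theta onto the
    diametrically opposite point (theta + 180 degrees), so the azimuth of the
    brightest antenna is flipped back by 180 degrees.
    """
    n = len(powers)
    brightest = max(range(n), key=lambda k: powers[k])
    focal_azimuth = 360.0 * brightest / n
    return (focal_azimuth + 180.0) % 360.0

# Hypothetical 16-antenna ring: the focal spot lands on antenna 10 (225 degrees).
powers = [0.1] * 16
powers[10] = 1.0
print(direction_from_ring(powers))  # 45.0
```

The point of the sketch is that once the lens has done the focusing, finding the direction is a lookup rather than a calculation.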

Instead of relying on large antenna arrays and complex software to calculate angles, EyeDAR uses its physical structure to do much of the work. The lens itself performs what engineers call “analog computing,” shaping and routing the waves before they ever reach a processor.

“Our lens consists of over 8,000 uniquely shaped, extremely small elements with a varying refractive index,” added Cho.

By carefully arranging those tiny elements, the researchers created a lens that bends and channels incoming radar waves in a controlled way, steering them to the right spot on the antenna array. In tests, this approach allowed EyeDAR to determine the direction of targets more than 200 times faster than traditional radar designs, according to Rice.

Speed and efficiency matter because direction finding is one of the most power-hungry and data-intensive parts of radar processing. Offloading that task to the hardware itself could make it practical to deploy many sensors across a city without huge energy or computing costs.
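To see why software direction finding is costly, here is a minimal Python sketch of one common approach: a conventional delay-and-sum (Bartlett) beam scan over a uniform linear antenna array. It is a generic textbook method chosen for illustration. The article does not say which baseline Rice compared EyeDAR against, and the 8-antenna array, half-wavelength spacing and 1-degree angle grid are all assumptions.

```python
import cmath
import math

def steering_vector(theta_deg: float, n_antennas: int, spacing: float = 0.5) -> list[complex]:
    """Phase pattern a plane wave from theta_deg produces across a linear array.

    spacing is the element spacing in wavelengths (0.5 is a common choice).
    """
    step = 2 * math.pi * spacing * math.sin(math.radians(theta_deg))
    return [cmath.exp(1j * step * k) for k in range(n_antennas)]

def beamscan(snapshot: list[complex], angles_deg, spacing: float = 0.5):
    """Delay-and-sum scan: test every candidate angle and keep the strongest."""
    n = len(snapshot)
    best_angle, best_power = None, -1.0
    for theta in angles_deg:
        a = steering_vector(theta, n, spacing)
        # Phase-align the antenna samples for this hypothesis, then sum them.
        y = sum(ak.conjugate() * xk for ak, xk in zip(a, snapshot))
        power = abs(y) ** 2
        if power > best_power:
            best_angle, best_power = theta, power
    return best_angle

# Hypothetical case: a noise-free echo arriving from 20 degrees on 8 antennas.
snapshot = steering_vector(20, 8)
print(beamscan(snapshot, range(-90, 91)))  # 20
```

Each snapshot costs about one complex multiply-add per antenna per candidate angle (here 8 x 181), and a radar would typically repeat this for many snapshots and range bins. A lens that physically routes each direction to its own antenna replaces that search with picking the strongest output.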

EyeDAR also communicates in an unusual way. Instead of sending out its own radar pulses, it listens for radar signals from passing vehicles and modulates their reflections.

The device rapidly switches between absorbing incoming waves and reflecting them back in a pattern that encodes digital information — essentially turning the reflected signal into a data stream that the car’s radar can read.

“Like blinking Morse code,” Cho added. “EyeDAR is a talking sensor: it is a first instance of integrating radar sensing and communication functionality in a single design.”
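In communications terms, switching between reflecting and absorbing resembles on-off keying applied to a backscattered signal. The Python sketch below simulates that idea under illustrative assumptions: the tag holds each state for a fixed number of radar samples per bit (`samples_per_bit`), absorbing still leaks a weak echo (`absorb_amp`), and the car decodes by averaging each bit period and thresholding. None of these names or parameter values come from the EyeDAR work.

```python
import random

REFLECT, ABSORB = 1, 0  # the tag's two switch states (illustrative encoding)

def encode_ook(bits: str, samples_per_bit: int = 4) -> list[int]:
    """Hold the reflect state for a '1' and the absorb state for a '0'."""
    states = []
    for b in bits:
        states.extend([REFLECT if b == "1" else ABSORB] * samples_per_bit)
    return states

def simulate_echo(states: list[int], reflect_amp: float = 1.0,
                  absorb_amp: float = 0.1, noise: float = 0.1,
                  seed: int = 0) -> list[float]:
    """Echo amplitude seen by the car's radar for each switch state, plus noise."""
    rng = random.Random(seed)
    return [(reflect_amp if s == REFLECT else absorb_amp) + rng.gauss(0.0, noise)
            for s in states]

def decode_ook(amplitudes: list[float], samples_per_bit: int = 4,
               threshold: float = 0.55) -> str:
    """Average each bit period and compare it with a fixed threshold.

    0.55 is the midpoint between the assumed reflect (1.0) and absorb (0.1) levels.
    """
    bits = []
    for i in range(0, len(amplitudes), samples_per_bit):
        chunk = amplitudes[i:i + samples_per_bit]
        bits.append("1" if sum(chunk) / len(chunk) > threshold else "0")
    return "".join(bits)

message = "1011001"
echo = simulate_echo(encode_ook(message))
print(decode_ook(echo) == message)  # True
```

Holding each state for several samples trades data rate for robustness, since averaging reduces the effect of noise on each bit decision.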

Because EyeDAR does not need to transmit its own radar, it can run on very low power. That makes it cheaper and easier to install in large numbers, potentially turning ordinary intersections into smart hubs that help coordinate traffic and protect vulnerable road users.

While the team is focused on autonomous vehicles, the technology could extend far beyond cars. Similar sensors might be built into delivery robots, drones or even wearable devices, giving them better awareness of their environment. Networks of EyeDAR units could also share information with one another, allowing each device to “see” well beyond its own line of sight.

For Cho, the project is also a statement about where computing is headed. As robots, cars and other autonomous systems move into everyday spaces and interact directly with people, she argues that clever physical design will need to complement advances in artificial intelligence and software.

“EyeDAR is an example of what I like to call ‘analog computing,’” Cho added. “Over the past two decades, people have been focusing on the digital and software side of computation, and the analog, hardware side has been lagging behind. I want to explore this overlooked analog design space.”

Next steps include refining the design, testing EyeDAR in more real-world traffic scenarios and exploring how many sensors would be needed — and where they should be placed — to make a meaningful difference in safety.

If the concept scales, tomorrow’s self-driving cars may not be navigating alone. They could be part of a larger, cooperative sensing network, with the road itself quietly watching, thinking and helping them steer clear of danger.

Source: Rice University